The Spring Boot Trap

A junior engineer tasked with handling slow WebSocket clients would reach for Spring’s @MessageMapping and configure a ThreadPoolTaskExecutor:

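    import org.springframework.context.annotation.Configuration;
    import org.springframework.messaging.simp.config.ChannelRegistration;
    import org.springframework.messaging.simp.config.MessageBrokerRegistry;
    import org.springframework.web.socket.config.annotation.EnableWebSocketMessageBroker;
    import org.springframework.web.socket.config.annotation.WebSocketMessageBrokerConfigurer;

    @Configuration
    @EnableWebSocketMessageBroker
    public class WebSocketConfig implements WebSocketMessageBrokerConfigurer {

        @Override
        public void configureMessageBroker(MessageBrokerRegistry config) {
            config.enableSimpleBroker("/topic");
        }

        @Override
        public void configureClientOutboundChannel(ChannelRegistration registration) {
            // Thread pool that delivers messages to clients. The pool size is illustrative;
            // queueCapacity is left at its default of Integer.MAX_VALUE: an unbounded queue.
            registration.taskExecutor().corePoolSize(4);
        }
    }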

This “just works” in development with 10 users. But the framework hides a catastrophic flaw: the unbounded queue. With default settings, the ThreadPoolTaskExecutor behind Spring’s simple broker channels is backed by a LinkedBlockingQueue with no capacity limit. When clients become slow (mobile networks, overloaded devices, packet loss), messages accumulate indefinitely.

The production reality: In October 2020, Discord’s Gateway fleet experienced memory exhaustion when iOS clients on cellular networks caused event queues to grow to millions of messages. The JVM heap filled with buffered events waiting for TCP acknowledgments that never arrived. Garbage collection paused for 30+ seconds. The entire shard crashed.

The framework abstraction prevented engineers from seeing the queue depth, allocation rate, or backpressure signals until production monitoring showed 98% heap usage. By then, it was too late.
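The difference between a queue that hides backpressure and one that exposes it can be seen with plain JDK classes. The following is a minimal sketch, not Spring's internals: QueueDepthDemo and fill are hypothetical names, and the capacity of 1024 is an arbitrary choice for illustration.

```java
import java.util.concurrent.ArrayBlockingQueue;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.LinkedBlockingQueue;

final class QueueDepthDemo {

    /** Offers n messages without blocking and returns how many the queue accepted. */
    static int fill(BlockingQueue<String> queue, int n) {
        int accepted = 0;
        for (int i = 0; i < n; i++) {
            if (queue.offer("event-" + i)) {
                accepted++;
            }
        }
        return accepted;
    }

    public static void main(String[] args) {
        // No-arg constructor: capacity is Integer.MAX_VALUE, so offer() never says no.
        BlockingQueue<String> unbounded = new LinkedBlockingQueue<>();
        // Fixed capacity: offer() returns false once 1024 messages are waiting.
        BlockingQueue<String> bounded = new ArrayBlockingQueue<>(1024);

        System.out.println("unbounded accepted " + fill(unbounded, 100_000)
                + ", depth " + unbounded.size()); // unbounded accepted 100000, depth 100000
        System.out.println("bounded accepted " + fill(bounded, 100_000)
                + ", depth " + bounded.size());   // bounded accepted 1024, depth 1024
    }
}
```

The unbounded queue swallows every message and gives the producer no signal. The bounded queue starts returning false, and that return value is the backpressure signal the rest of this section builds on.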

The Failure Mode: Death by a Thousand Slow Clients

Consider a Gateway handling 100,000 concurrent WebSocket connections. A guild event (message posted in a channel with 5,000 members) generates 5,000 outbound messages. The Gateway must fan out these messages to 5,000 client sockets.

The Naive Approach:

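    for (User user : guild.getMembers()) {
        // getOutputQueue() is a hypothetical accessor for the member's per-client queue
        user.getOutputQueue().put(event); // Blocks when a bounded queue is full; an unbounded queue just grows
    }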

Why this crashes at scale:

Memory Explosion: Each LinkedBlockingQueue.Node costs roughly 40 bytes (object header + next pointer + item reference; the exact size depends on the JVM and its pointer compression settings). At 10k messages per slow client × 1,000 slow clients = 10M nodes ≈ 400MB just for queue metadata. The actual message payloads add another 2GB.

GC Thrashing: The Eden space fills every 2 seconds with new queue nodes. Young GC pauses spike to 500ms. Eventually, nodes promote to Old Gen, triggering Full GC pauses of 5-10 seconds. The Gateway stops responding. Clients time out and reconnect, creating a death spiral.

Thread Starvation: If using bounded queues with blocking, queue.put() blocks the event processing thread when the queue is full. One slow client blocks the entire guild’s event delivery.

TCP Head-of-Line Blocking: The OS socket send buffer (typically 64KB) fills when the client is slow. The write call then blocks or, on a non-blocking socket, fails with EAGAIN (which Java NIO surfaces as SocketChannel.write() returning 0 bytes written). Without proper buffering, the Gateway must choose: block the thread or drop the message.
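The memory figures above are simple multiplication, and it helps to see them worked through. This sketch uses the per-node estimate quoted in the text; the 200-byte average payload is an assumption, chosen only because it reproduces the 2GB payload figure. SlowClientMemoryEstimate and totalBytes are hypothetical names.

```java
final class SlowClientMemoryEstimate {

    /** Total bytes held when every slow client has a full backlog of queued items. */
    static long totalBytes(long messagesPerClient, long slowClients, long bytesPerItem) {
        return messagesPerClient * slowClients * bytesPerItem;
    }

    public static void main(String[] args) {
        long messagesPerClient = 10_000; // backlog per slow client (from the text)
        long slowClients = 1_000;        // number of slow clients (from the text)

        long nodeBytes = totalBytes(messagesPerClient, slowClients, 40);     // queue nodes only
        long payloadBytes = totalBytes(messagesPerClient, slowClients, 200); // assumed 200-byte payloads

        System.out.println("queue nodes:  " + nodeBytes / 1_000_000 + " MB");    // queue nodes:  400 MB
        System.out.println("payloads:     " + payloadBytes / 1_000_000 + " MB"); // payloads:     2000 MB
    }
}
```

Both terms scale linearly with the backlog per client, which is why capping that backlog is the first lever to pull.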

The Core Engineering Problem: How do we implement a bounded buffer that:

Never blocks the producer (event processing thread)

Detects when a consumer is slow (backpressure signal)

Avoids GC pressure (zero allocations on the hot path)

Supports lock-free concurrent access (no synchronized blocks)

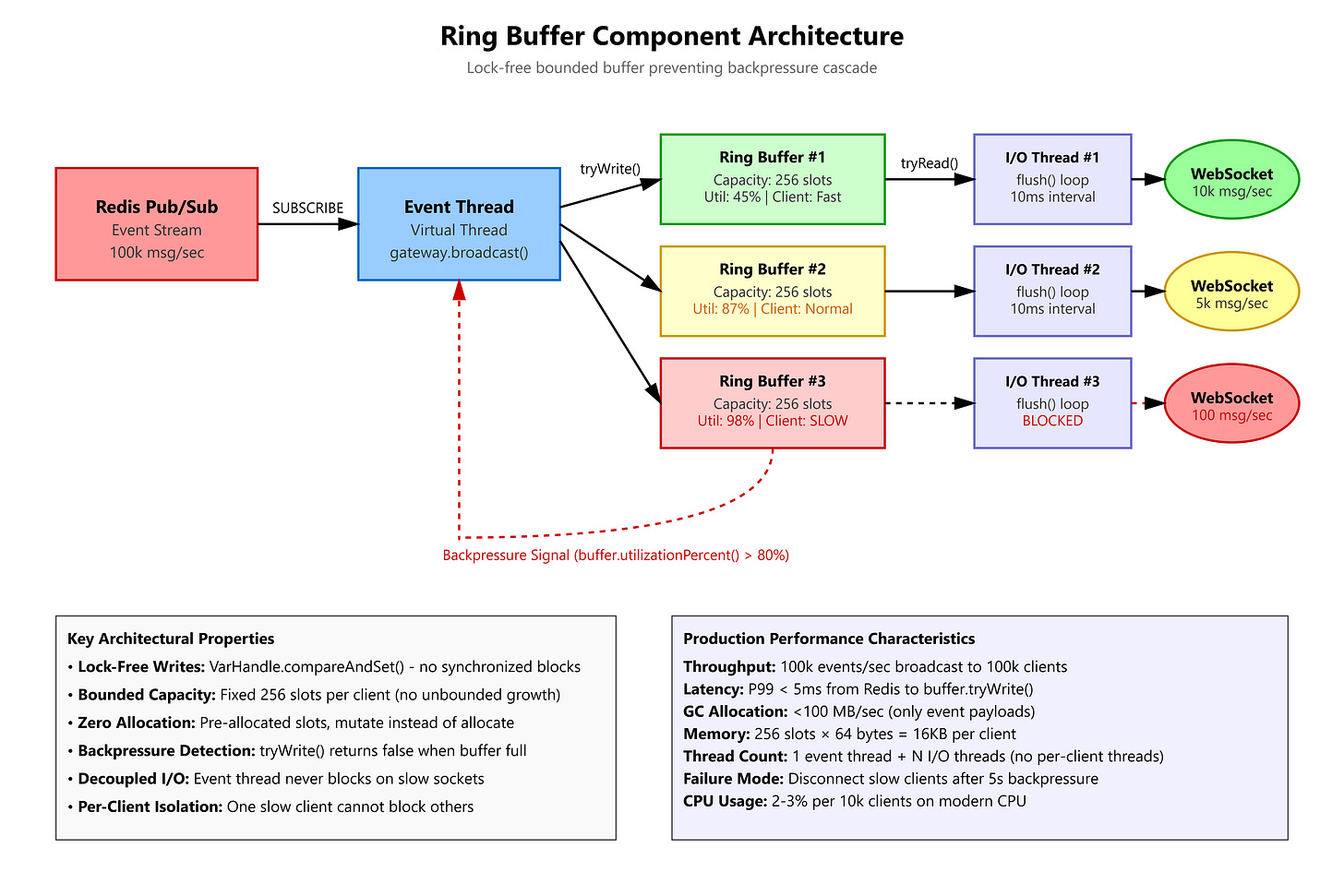

The Flux Architecture: Ring Buffers as the Shock Absorber

A Ring Buffer (circular buffer) is a fixed-size array where writes wrap around using modulo arithmetic. It provides O(1) enqueue/dequeue with bounded memory and zero allocations after initialization.

Key Properties:

Fixed Capacity: Allocated once at client connection time (e.g., 1024 slots)

Head/Tail Pointers: Track write position (head) and read position (tail)

Lock-Free: Use VarHandle CAS operations for atomic updates

Overflow Detection: When (head + 1) % capacity == tail, the buffer is full
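These properties can be sketched in a few dozen lines. The version below is a minimal illustration under a simplifying assumption: exactly one producer thread and one consumer thread per buffer. Under that assumption no CAS loop is needed, so the sketch uses VarHandle release/acquire accesses instead of compareAndSet; a multi-producer buffer would need CAS plus extra care around slot publication. SpscRingBuffer is a hypothetical name, not a class from this series.

```java
import java.lang.invoke.MethodHandles;
import java.lang.invoke.VarHandle;

final class SpscRingBuffer<E> {
    private static final VarHandle HEAD;
    private static final VarHandle TAIL;

    static {
        try {
            MethodHandles.Lookup lookup = MethodHandles.lookup();
            HEAD = lookup.findVarHandle(SpscRingBuffer.class, "head", int.class);
            TAIL = lookup.findVarHandle(SpscRingBuffer.class, "tail", int.class);
        } catch (ReflectiveOperationException e) {
            throw new ExceptionInInitializerError(e);
        }
    }

    private final Object[] slots; // allocated once; never resized
    private int head;             // next write index, written only by the producer
    private int tail;             // next read index, written only by the consumer

    SpscRingBuffer(int capacity) {
        if (capacity < 2) {
            throw new IllegalArgumentException("capacity must be at least 2");
        }
        this.slots = new Object[capacity];
    }

    /** Producer side. Never blocks; returns false when the buffer is full. */
    boolean offer(E element) {
        int h = head;                      // the producer owns head, so a plain read is enough
        int next = (h + 1) % slots.length;
        if (next == (int) TAIL.getAcquire(this)) {
            return false;                  // full: (head + 1) % capacity == tail
        }
        slots[h] = element;
        HEAD.setRelease(this, next);       // publish the slot write to the consumer
        return true;
    }

    /** Consumer side. Returns null when the buffer is empty. */
    @SuppressWarnings("unchecked")
    E poll() {
        int t = tail;                      // the consumer owns tail
        if (t == (int) HEAD.getAcquire(this)) {
            return null;                   // empty: head == tail
        }
        E element = (E) slots[t];
        slots[t] = null;                   // drop the reference so the payload can be collected
        TAIL.setRelease(this, (t + 1) % slots.length); // hand the slot back to the producer
        return element;
    }
}
```

One consequence of this full check is that one slot always stays empty, so a buffer created with 1024 slots holds at most 1023 messages. That spare slot is what lets head == tail mean "empty" without a separate counter.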

Architecture Integration:

    Redis Pub/Sub → Event Thread → Ring Buffer (per client) → I/O Thread → WebSocket
                                        ↓
                   VarHandle.compareAndSet(head, expected, newHead)
                                        ↓
         if (full) → emit_backpressure_metric() → policy.handle(DISCONNECT)

The Ring Buffer acts as a shock absorber between the fast event stream (100k msg/sec from Redis) and the slow client socket (1k msg/sec on 3G). It absorbs bursts while providing a clear backpressure signal when the client cannot keep up.
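The "full means backpressure" step of that pipeline can be sketched on its own. To keep the example self-contained, an ArrayBlockingQueue stands in for the per-client ring buffer (it is bounded but lock-based, so it is not the lock-free structure described above). ClientOutbox, Result, and the overflow counter are hypothetical names, and disconnecting on the first overflow is just one possible policy.

```java
import java.util.concurrent.ArrayBlockingQueue;
import java.util.concurrent.atomic.AtomicLong;

final class ClientOutbox {
    enum Result { ENQUEUED, DISCONNECT }

    // Stand-in for the per-client ring buffer: bounded, but uses a lock internally.
    private final ArrayBlockingQueue<String> buffer;
    private final AtomicLong overflowMetric = new AtomicLong(); // hypothetical backpressure counter
    private volatile boolean open = true;

    ClientOutbox(int capacity) {
        this.buffer = new ArrayBlockingQueue<>(capacity);
    }

    /** Called by the event thread. Never blocks: offer() returns false when full. */
    Result publish(String event) {
        if (!open) {
            return Result.DISCONNECT;     // client already marked for disconnect
        }
        if (buffer.offer(event)) {
            return Result.ENQUEUED;
        }
        overflowMetric.incrementAndGet(); // emit the backpressure signal
        open = false;                     // policy: disconnect the slow client
        return Result.DISCONNECT;
    }

    /** Called by the I/O thread. Returns null when nothing is pending. */
    String nextToSend() {
        return buffer.poll();
    }

    long overflowCount() {
        return overflowMetric.get();
    }

    boolean isOpen() {
        return open;
    }

    public static void main(String[] args) {
        ClientOutbox outbox = new ClientOutbox(2);
        System.out.println(outbox.publish("e1"));   // ENQUEUED
        System.out.println(outbox.publish("e2"));   // ENQUEUED
        System.out.println(outbox.publish("e3"));   // DISCONNECT
        System.out.println(outbox.overflowCount()); // 1
    }
}
```

The event thread gets an answer immediately in every case, so one slow client costs a counter increment and a disconnect rather than a stalled fan-out loop.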